The History Of Chatbots – From ELIZA to ChatGPT

Chatbots have been around for a while now, but it’s only in recent years that they have gained real popularity among users and businesses alike.

This growing awareness of chatbots and conversational interfaces came largely with developments in artificial intelligence and machine learning, as well as with the increasing popularity of messaging apps.

Today, chatbots are used in various industries and for different use cases. In this article, we will look at the history of chatbots, what exactly chatbots are and where they come from.

Let’s get started.

What are chatbots?

In essence, a chatbot is an artificial intelligence program that chats with you. It can provide information and support, book things for you and much more. How awesome is that!

They are used to simulate human-like interactions with users, to support business processes, to gather information from large groups and to act as personal assistants, among other things. Bots more generally are also used by search engines to crawl the web and index new pages for future searches.

Sometimes bots are used for malicious purposes as well, like transmitting computer viruses or artificially increasing views on YouTube videos or web articles.

A chatbot is a digital assistant that lives in text, messaging or voice-based applications. It lets individuals and groups submit their inquiries via text or voice.

History of chatbots – chatbot development over the course of time

The first chatbot ever was developed by MIT professor Joseph Weizenbaum in the 1960s. It was called ELIZA. You’ll read more about ELIZA and other popular chatbots that were developed in the second half of the 20th century later on.

In 2011, the Chinese company Tencent launched WeChat, a messaging app that also became a platform for chatbots. Since its launch, WeChat has won over many users who remain remarkably loyal to it, and it is now a thriving social media platform.

The platform makes it easy to create very simple chatbots. It has become one of the most popular ways for marketers and businesses to reduce the work involved in interacting with customers online.

Though it has limitations and is less capable than today’s messaging apps such as Facebook Messenger, Slack, and Telegram, that doesn’t mean you cannot build a very smart bot on WeChat. Chumen Wenwen, a company founded in 2012 by a former Google employee, has built a very sophisticated bot that runs on WeChat.

Early in 2016, we saw the introduction of the first wave of artificial intelligence technology in the design of chatbots. Social media platforms like Facebook enabled developers to build a chatbot for their brand or service so that customers could carry out some of their daily tasks from inside their messaging platform.

The introduction of chatbots into everyday life has brought us to the era of the conversational interface. It’s an interface that soon won’t require a screen or a mouse. The interface will be entirely conversational, and those conversations will be indistinguishable from the ones we have with our friends and relatives.

To fully explain the significance of this soon-to-be reality, we have to go back to the earliest days of the computer, when the desire for artificial intelligence and a conversational interface first emerged.

History of chatbots – From ELIZA to ChatGPT

ELIZA

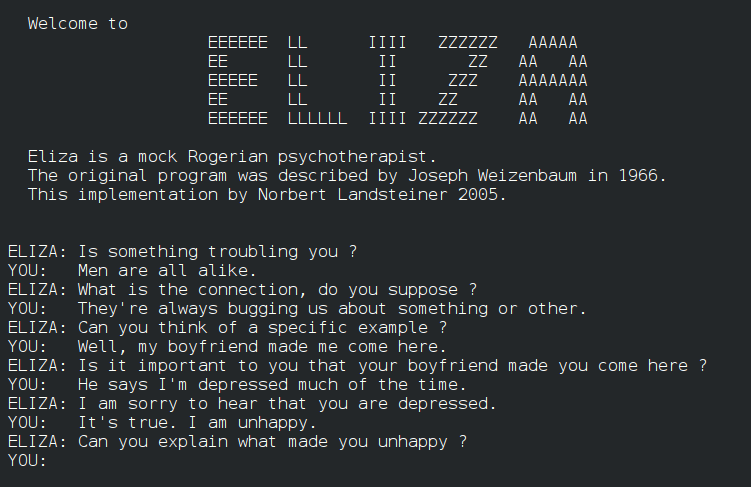

As mentioned above, ELIZA was the very first chatbot. It was created by Joseph Weizenbaum in 1966 and used pattern matching and substitution to simulate conversation.

The program was designed to mimic human conversation. ELIZA worked by parsing the words that users entered into a computer and then matching them against a list of possible scripted responses. It used a script that simulated a psychotherapist. The script had a significant impact on natural language processing and artificial intelligence, with copies and variants popping up at universities around the country.
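The pattern matching and substitution idea can be illustrated with a minimal sketch. This is not Weizenbaum’s original program or script: the rules, the pronoun table and the function names below are assumptions made up for illustration.

```python
import re

# Hypothetical pronoun swaps applied to the captured fragment ("my" -> "your").
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are",
               "you": "I", "your": "my"}

# Hypothetical script: (pattern, response template) pairs, checked in order.
RULES = [
    (re.compile(r"i need (.*)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"i am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r".*\bmother\b.*", re.IGNORECASE), "Tell me more about your family."),
]

DEFAULT_RESPONSE = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words so the fragment can be echoed back."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(user_input: str) -> str:
    """Return the first scripted response whose pattern matches the input."""
    text = user_input.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.match(text)
        if match:
            # Substitute the reflected user text into the scripted template.
            groups = [reflect(group) for group in match.groups()]
            return template.format(*groups)
    return DEFAULT_RESPONSE


print(respond("I need a break from my job."))
```

Even with three rules, the reply echoes the user’s own words back as a question, which hints at why people felt understood by a program that had no understanding of what they typed.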

However, Weizenbaum was troubled by the reaction of users. He intended ELIZA to be a mere caricature of human conversation, yet users were suddenly confiding their most profound thoughts in ELIZA. Experts were declaring that chatbots would be indistinguishable from humans within a few years.

Weizenbaum rejected the notion that machines could replace human intellect. He argued instead that such devices were just tools, and extensions of the human mind. He further stressed that computers’ understanding of language was entirely dependent on the context in which they were used. Furthermore, Weizenbaum argued that a more general computer understanding of human language was not possible.

In the decades that followed, chatbot makers have built upon Weizenbaum’s model to strive for more human-like interactions. Passing the Turing test, which pits a bot’s conversational skills against a panel of human judges, has become a common goal. The hardest part of the Turing test is that there is no limit on what people can discuss.

PARRY

PARRY was built by American psychiatrist Kenneth Colby in 1972. It is a natural language program that imitates a patient with paranoid schizophrenia, attempting to simulate the illness and model the thinking of such an individual.

PARRY works via a complicated system of assumptions, attributions, and “emotional responses” triggered by changing weights assigned to verbal inputs. To validate the work, PARRY was tested in the early seventies using a variation of the Turing test. Human interrogators, interacting with the program via a remote keyboard, were unable to distinguish PARRY from a real paranoid individual with more than random accuracy.
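To give a rough feel for “emotional responses” driven by weighted inputs, here is a toy sketch. It is not Colby’s actual model: the trigger words, weights, thresholds and replies are all invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights: how strongly each trigger word raises an affect level.
TRIGGER_WEIGHTS = {
    "police": {"fear": 0.4, "mistrust": 0.2},
    "crazy": {"anger": 0.5},
    "mafia": {"fear": 0.6},
}


@dataclass
class AffectState:
    """Running emotional state that persists across the conversation."""
    fear: float = 0.0
    anger: float = 0.0
    mistrust: float = 0.0

    def update(self, utterance: str) -> None:
        """Raise affect levels for every trigger word found, capped at 1.0."""
        for word in utterance.lower().split():
            weights = TRIGGER_WEIGHTS.get(word.strip(".,!?"), {})
            for affect, weight in weights.items():
                setattr(self, affect, min(1.0, getattr(self, affect) + weight))


def respond(state: AffectState, utterance: str) -> str:
    """Update the state, then pick a reply based on the strongest concern."""
    state.update(utterance)
    if state.fear >= 0.5:
        return "I don't want to talk about that."
    if state.anger >= 0.5:
        return "You have no right to say that."
    if state.mistrust >= 0.2:
        return "Why do you want to know?"
    return "Go on."


state = AffectState()
print(respond(state, "Do you know the police?"))
print(respond(state, "Are the police after you?"))
```

Because the state accumulates, the same topic raised twice produces a different, more defensive reply the second time. That is the key difference from a stateless script like the one ELIZA used.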

Fifty years ago, Kenneth Mark Colby was the only psychiatrist thinking about how computers could contribute to the understanding of mental illness. He went on to start the project “Overcoming Depression”, which lasted until his death in 2001.


Jabberwacky

The chatbot was created by developer Rollo Carpenter in 1988. It aimed to simulate a natural human conversation in an entertaining way.

Jabberwacky has influenced later technological developments. Since its launch, some people have also used it for academic research through its website.

The chatbot is said to use an AI technique called “contextual pattern matching.”

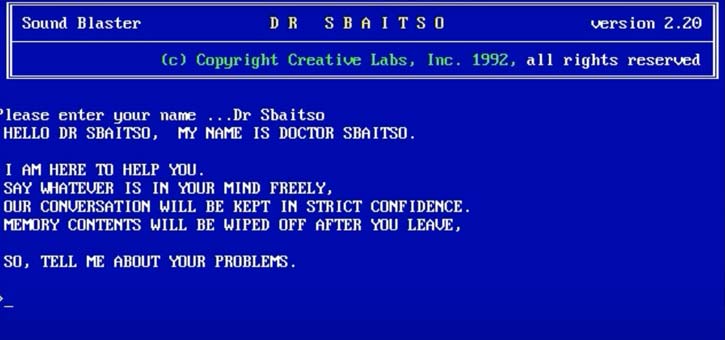

Dr. Sbaitso

Dr. Sbaitso is a chatbot created by Creative Labs for MS-DOS in 1992.

It was one of the earliest efforts to incorporate A.I. into a chatbot and is known for its fully voiced chat program, which read its replies aloud using speech synthesis.

The program would converse with the user as if it were a psychologist. Most of its responses were along the lines of “Why do you feel that way?” rather than any sort of complicated interaction.

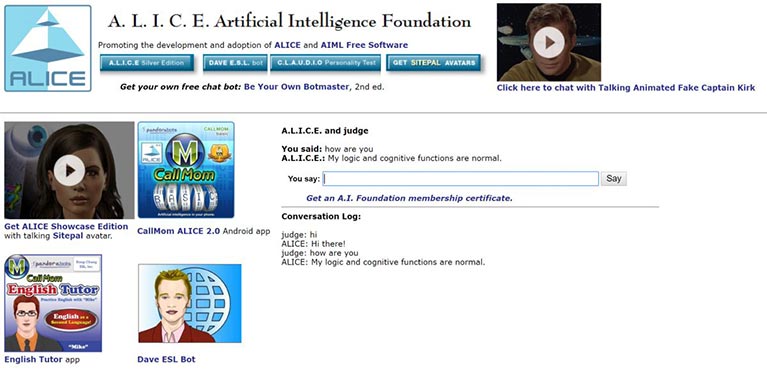

A.L.I.C.E. (Artificial Linguistic Internet Computer Entity)

A.L.I.C.E. is a natural language processing chatbot that uses heuristic pattern matching to carry on conversations. Richard Wallace began developing A.L.I.C.E. in 1995. It is also known as Alicebot because it first ran on a computer named Alice.

The program works with an XML schema known as Artificial Intelligence Markup Language (AIML), which is used to specify conversation rules. In 1998, the program was rewritten in Java, and in 2001 Wallace published an AIML specification. From there, other developers wrote free and open source implementations of A.L.I.C.E. in different programming languages and for a variety of natural languages.
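To show what such conversation rules look like, here is a minimal sketch of two AIML categories and a toy interpreter for them. The `category`, `pattern`, `template` and `star` elements are part of AIML, but the two rules and the interpreter are made up for illustration. A real AIML engine normalizes input and prioritizes matches in ways this sketch ignores.

```python
import re
import xml.etree.ElementTree as ET

# Two example AIML categories (the rules themselves are invented).
AIML_SOURCE = """
<aiml version="1.0.1">
  <category>
    <pattern>HELLO</pattern>
    <template>Hi there!</template>
  </category>
  <category>
    <pattern>MY NAME IS *</pattern>
    <template>Nice to meet you, <star/>.</template>
  </category>
</aiml>
"""


def load_categories(source: str):
    """Parse AIML text into (compiled regex, template element) pairs."""
    categories = []
    for category in ET.fromstring(source).iter("category"):
        pattern_text = category.find("pattern").text.strip()
        # Translate the AIML "*" wildcard into a regex capture group.
        regex = "^" + re.escape(pattern_text).replace(r"\*", "(.+)") + "$"
        categories.append((re.compile(regex, re.IGNORECASE),
                           category.find("template")))
    return categories


def render(template: ET.Element, star: str) -> str:
    """Flatten a template, replacing each <star/> with the wildcard text."""
    parts = [template.text or ""]
    for child in template:
        if child.tag == "star":
            parts.append(star)
        parts.append(child.tail or "")
    return "".join(parts).strip()


def respond(categories, user_input: str) -> str:
    """Answer with the template of the first category whose pattern matches."""
    text = user_input.strip().rstrip(".!?")
    for regex, template in categories:
        match = regex.match(text)
        if match:
            return render(template, match.group(1) if match.groups() else "")
    return "I have no answer for that."


rules = load_categories(AIML_SOURCE)
print(respond(rules, "My name is Ada."))
```

Keeping the rules in XML rather than in code is what allowed other developers to reuse the same rule files across implementations in different programming languages.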

The program simulates chatting with a real person over the Internet. Alice presents herself as a young woman and tells users her age, hobbies and other fascinating facts, as well as responding to what they say.

If you want to give it a try, you can do so here.

Just remember, this was released in 1995, so don’t expect too much in terms of user interface and design.

SmarterChild

SmarterChild was in many ways a precursor of Siri and was developed in 2001.

The chatbot was available on AOL Instant Messenger (AIM) and MSN Messenger and could carry on fun conversations while offering quick access to data from other services.

Years later, after most people had stopped using AIM, Microsoft built its own SmarterChild-style chatbot, which targeted 18- to 24-year-olds in the U.S.

Siri

Siri was launched for iOS in 2010 and acquired by Apple the same year; it is an intelligent personal assistant and knowledge navigator that uses a natural language UI. It paved the way for all the AI bots and personal assistants that followed.

A patent application published by the United States Patent and Trademark Office details a new Apple service where users could make inquiries and converse with Siri through Messages. The application is similar to one published late last year, but now includes deeper integration with audio, video, and image files.

Similar to other texting apps and Facebook Messenger bots, Apple’s patent describes a Siri that could perform its current duties without the user having to speak aloud. That could be helpful in many public settings.

Siri could respond to text, audio, images, and video sent to it by the user. Apple said this would result in a richer interactive experience between a consumer and a digital assistant.

The patent provides a few examples of a conversation held between Siri and a user in Messages, with the user asking questions.

Google Now/Google Assistant

Google Now was launched by Google Inc. in 2012. It answers questions, performs actions through requests made to a set of web services and makes recommendations.

It was part of a package of updates and UI modifications for mobile search, which included a female-voiced mobile assistant to compete with Apple’s Siri.

Google Now was initially a way to get contextually appropriate information based on location and time of the day. It evolved to become much more complicated and elaborate, with a broad range of content categories delivered on cards.

It is sometimes referred to as predictive search. It was built for use on smartphones and was upgraded over time to accommodate several features.

Google Now was replaced by Google Assistant in 2017. Today, the assistant is part of a more aggressive Google search growth strategy. The idea is simple: Google wants to provide information in an easy-to-read format before you even know you need it.


Cortana

Cortana was first demonstrated at Microsoft’s Build 2014 developer conference, and it was integrated directly into both Windows Phone devices and Windows 10 PCs.

The program uses voice recognition and related algorithms to receive and respond to voice commands.

To get started, users type a question in the search box, or select the microphone and talk to Cortana. Anyone who is not sure what to say will see suggestions on the lock screen, as well as in Cortana home after selecting the search box on the taskbar.

Cortana can set reminders based on time, places, or people, send emails and texts, create and manage lists, chit-chat, play games, and find facts, files, locations, and other information.

Alexa

Alexa is an intelligent personal assistant developed by Amazon. It was introduced in 2014 and is now built into devices such as the Amazon Echo, the Echo Dot, the Echo Show and more. There is also an Alexa app, and more devices from third-party manufacturers have Alexa built into them.

All you have to do is say “Alexa, play some music” or “Alexa, find me an Italian restaurant” and she will help you out.

Using nothing but the sound of your voice, you can search the Web, play music, create to-do or shopping lists, set alarms, stream podcasts, play audiobooks, get news or weather reports, control your smart-home products and more.

To add to the capabilities of any Alexa-enabled device, Amazon allows developers to build and publish skills for Alexa using the Alexa Skills Kit (ASK). You can download skills for free with the Alexa app.

ChatGPT

ChatGPT is a chatbot built on a large language model trained by OpenAI. It was released by OpenAI in November 2022. It is designed to assist users in generating human-like text based on given input. ChatGPT can be used for a variety of tasks, including conversation generation and language translation.

The model is trained on a massive amount of data, allowing it to generate text that is often difficult to distinguish from text written by a human. ChatGPT has been praised for its ability to generate natural-sounding text and its potential applications in a variety of fields. By the way – this abstract was created by ChatGPT.

GPT-4, the next generation of the GPT model, offers enhanced capabilities in natural language processing. It features advanced algorithms and has been trained on an even more extensive dataset, resulting in higher accuracy and coherence in the generated texts. It can handle more complex conversations, provide more precise answers, and cover a broader range of topics. GPT-4 was released by OpenAI in March 2023.

Google Gemini

Google Gemini, formerly known as Google Bard, is an AI language model introduced by Google in February 2023. Imagine having a virtual assistant that not only answers your questions but also writes creative texts, engages in conversations, and even translates in real time. Google Gemini utilizes advanced algorithms and has been trained on massive amounts of data to generate accurate and natural-sounding texts. It is designed to be easy and intuitive to use, whether you are a developer looking to create a voice-controlled app or a user seeking quick answers to queries. With Google Gemini, Google pushes the boundaries of AI technology, making it accessible and useful for everyone, and opening up exciting possibilities in the field of artificial intelligence.

Microsoft Copilot

Microsoft Copilot is an intelligent assistant developed by Microsoft to help users work more productively. Since November 2023, Copilot has been available to Microsoft enterprise customers and integrated into Bing. It supports applications like Word, Excel, PowerPoint, and Outlook within Microsoft 365. Leveraging artificial intelligence and machine learning, Microsoft Copilot automates and simplifies various tasks. Simply say, “Copilot, summarize this document” or “Copilot, analyze this data,” and it takes care of the task. Copilot can write texts, analyze data, create presentations, and even compose emails. It accesses data and documents stored in your Microsoft 365 environment to provide context-aware suggestions and solutions.

As you can see, chatbots have come a long way. We hope that our short history of chatbots was useful. If you have any questions about chatbots or Conversational AI, or would like to discuss specific use cases for your business, don’t hesitate to contact us.
